Alerting Overview

Overview of Last9's Alerting Capabilities

Last9 comes with complete monitoring support, including alerting and notification capabilities. Irrespective of your tool choice, a few problems plague today's alerting journey — coverage, fatigue, and cleanup. Unfortunately, there are no easy answers to these complex problems.

However, with advanced features like Pattern-based Alerting and an Alert Manager redesigned with high cardinality in mind, Last9 helps you stay ahead.

In addition to being fully PromQL compatible, Last9 provides features like a real-time alert monitor and a historical health view. You can also perform advanced tasks, such as correlating alerts with events, so that you can keep up with constantly evolving infrastructure and services.

Alerting with Last9 starts with creating an Alert Group, which contains one or more Alert Rules. These Alert Rules evaluate the PromQL queries that are defined as Indicators in the Alert Group. Using Alert Monitor, you can view a live-updating stream of all your Alert Rules across all Alert Groups.
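To make the relationship between these components concrete, here is a minimal conceptual sketch in Python. It is not Last9's API or configuration schema: the class names, fields, the simple "greater than threshold" condition, and the sample PromQL query are all assumptions chosen for illustration. It only shows how an Alert Group might hold Indicators (named PromQL queries) and the Alert Rules that evaluate them.

```python
from dataclasses import dataclass, field


@dataclass
class Indicator:
    """A named PromQL query (hypothetical model, not Last9's schema)."""
    name: str
    promql: str


@dataclass
class AlertRule:
    """A rule that checks one indicator against a static threshold (assumed condition)."""
    name: str
    indicator: str   # name of an Indicator defined in the same group
    threshold: float

    def is_firing(self, value: float) -> bool:
        # Illustrative condition only: fire when the value exceeds the threshold.
        return value > self.threshold


@dataclass
class AlertGroup:
    """Holds indicators and the rules that evaluate them."""
    name: str
    indicators: dict[str, Indicator] = field(default_factory=dict)
    rules: list[AlertRule] = field(default_factory=list)

    def add_indicator(self, indicator: Indicator) -> None:
        self.indicators[indicator.name] = indicator

    def add_rule(self, rule: AlertRule) -> None:
        # A rule may only reference an indicator defined in this group.
        if rule.indicator not in self.indicators:
            raise ValueError(f"unknown indicator: {rule.indicator}")
        self.rules.append(rule)

    def firing_rules(self, values: dict[str, float]) -> list[str]:
        """Return the names of rules whose indicator value breaches the threshold."""
        return [
            rule.name
            for rule in self.rules
            if rule.indicator in values and rule.is_firing(values[rule.indicator])
        ]


group = AlertGroup(name="checkout-service")
group.add_indicator(Indicator(
    name="error_rate",
    promql='sum(rate(http_requests_total{status=~"5.."}[5m]))',
))
group.add_rule(AlertRule(name="high-error-rate", indicator="error_rate", threshold=5.0))

# In a real system the values come from evaluating the PromQL; here they are supplied by hand.
print(group.firing_rules({"error_rate": 7.2}))
```

In this sketch, a rule cannot be added unless its indicator already exists in the group, which mirrors the idea that Alert Rules evaluate Indicators defined in the same Alert Group.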

In the following section we dive deeper into each of these components.

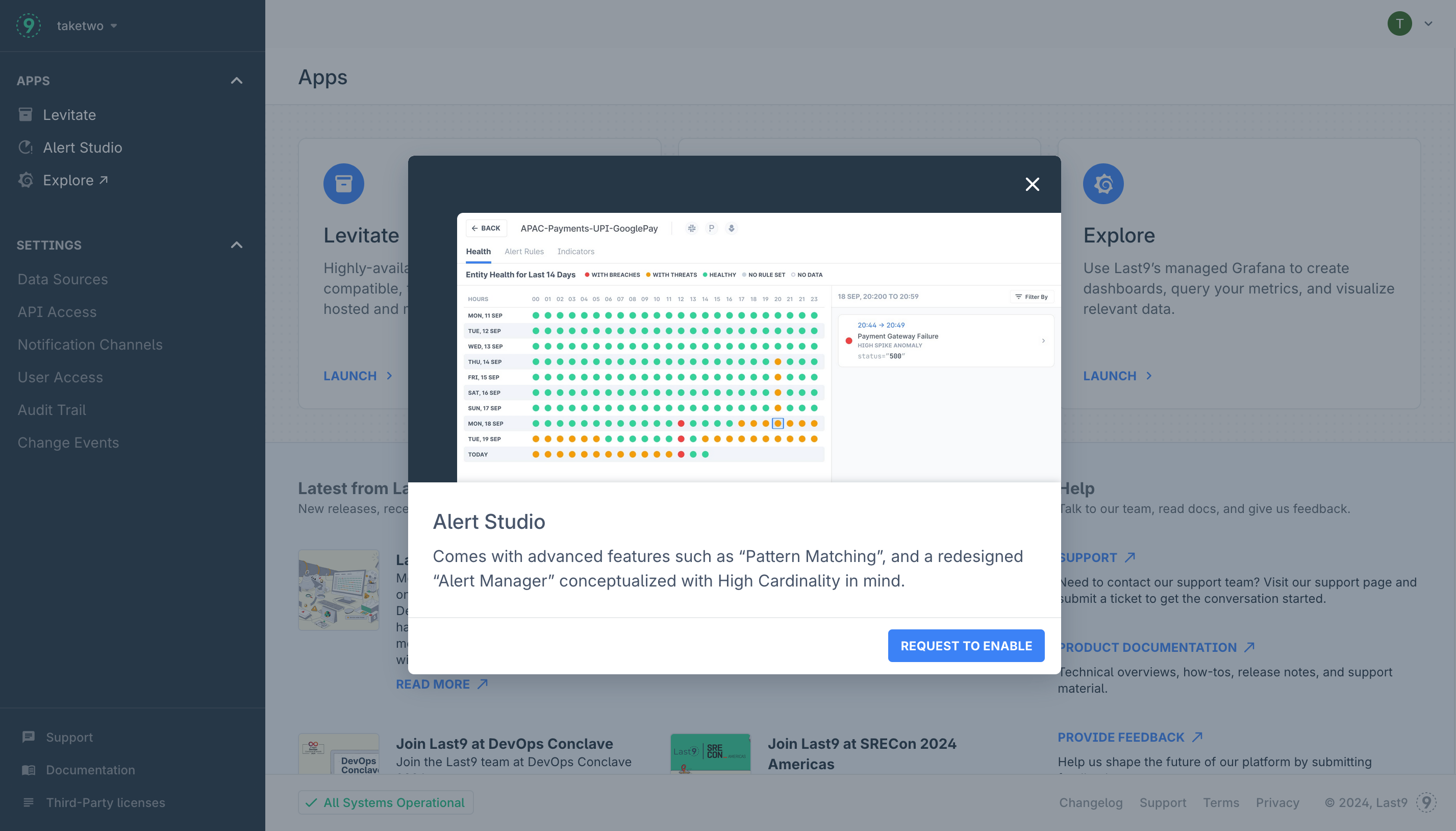

Enabling Alert Studio

All new orgs need to request access to Alert Studio. This is a one-time action that takes about 30 minutes to complete, though it is usually done much faster.

To enable Alert Studio:

- Navigate to Home → Alert Studio and click on the Request To Enable button
- Once the request has been sent, come back in some time to start using Alert Studio

Troubleshooting

Please get in touch with us on Discord or by email if you have any questions.